AI has proliferated into nearly every sector. Its versatility has carried it into the United States armed forces, where military-grade AI differs from casual generative use or the way a manufacturing plant employs it. Distinguishing what sets it apart is vital for technological development, because those traits show how AI has been adapted to military standards.

What Defines Military-Grade AI?

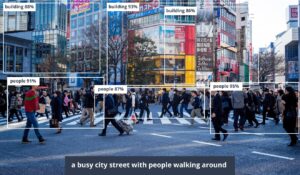

AI worthy of military implementation encompasses two facets: intentions and specifications. Military-grade AI aims to protect nations by gathering knowledge and data about adversaries. The information is collected at a speed that outpaces human ability, promising higher security and accuracy.

AI expedites ongoing investigations and streamlines preparation for troubling scenarios, resulting in higher productivity.

“Military-grade AI is helpful on and off the battlefield for offensive and defensive purposes.”

Another use is autonomous weaponry, where AI is integrated into weapons or related control systems. Military AI tools are not sold commercially or authorized for private use. Because of their dangerous properties, they must stay under governmental lock and key, with strict prerequisites for approving operators.

The armed forces cannot use AI that fails to meet standards. Military technology must be resilient and robust. Although it will rest safely in climate-controlled places like the Pentagon, it will also travel to humid, turbulent environments. Companies must build purpose-designed military-off-the-shelf technology that incorporates AI and meets the standards for every platform, from aircraft to naval vessels.

Military AI must have safeguards that prevent users from exploiting citizens. The technology must incorporate the most up-to-date cybersecurity practices for national security and defense. Otherwise, AI opens more backdoors for threat actors than it closes. For example, when AI scans satellite images of potential battlefields, the geolocation data must remain safe from hackers and unauthorized parties.

These characteristics shape AI expectations for the United States military. Staff must adjust their job descriptions, pursue more digital literacy training and understand the importance of data governance in novel digital infrastructure. Is AI effective in the ways it is currently used?

Marketing to Corporate Employers

The qualifier “military-grade” has become a marketing term. Employers worldwide want the benefits of AI, especially as workers go remote. Bottom lines hinge on accountability and trust, and enterprises want failsafes and management tools to keep employees in line.

The same technology militaries use for spyware translates to workplace oversight. Known as bossware, these programs take screenshots, monitor worker productivity and assess growth potential.

Bossware companies may not be military-focused, but the surveillance technology remains similar. The software-as-a-service presents itself as proactive employee engagement, while some vendors call it reputation management or insider threat assessment. Either way, it erodes trust between employees and managers. The services have as much potential to deter employees from unionizing as they do to uncover terror threats.

Using military-grade AI this way leads to ethical questions, such as:

- How will regulators respond to this monitoring when the tech should not harm or upset citizens?

- How safe are individuals and their data?

- Is military AI too severe to trial before adequate research is available?

- Are there ethical repercussions for adapting military-style technology on such a commercial scale?

- Is this a human rights issue more than a national security concern?

It is too soon to answer these questions, but regulatory bodies must discuss the concerns to get ahead of the narrative. Otherwise, the result may be civil unrest, despite AI’s intended purpose of protecting the nation.

Generating Responses to Global Crises

The Pentagon and the Department of Defense are taking generative AI to the next level. While laypeople ask ChatGPT to write poems and tell jokes, the DOD wants to experiment with generating solutions to global issues. Bureaucratic processes require scheduling meetings, creating presentations and going through multiple chains of command to approve national action. What if AI could speed up those preliminaries?

Experiment details are top secret, but results suggest crafting the United States military’s response to an escalating problem could take 10 minutes instead of several weeks. Officials feed large language models confidential information to see how well they construct actionable, practical ideas.

As with all military-grade AI, there are evident concerns in practice. Generative AI is prone to hacking, such as when cybercriminals contaminate data sets to increase bias or the likelihood of hallucinations.

“AI may pose a rational plan one day, and the next, it sneaks in malware or unintelligible nonsense.”

One AI model has a data set with 60,000 pages of Chinese and United States documentation, which it could use to choose the victor in a potential war. However, unbalanced information skews results, especially without proper oversight.

Competing in the AI Arms Race

The most likely use of military AI is as weaponry. Citizens fear it will follow a path similar to the atomic bomb’s after its inception: capable of instigating worldwide conflict, but this time autonomous or remotely operated. Tests demonstrate that AI can drop bombs with relative accuracy on battlefields analyzed as a grid. The more the U.S. armed forces practice with these settings, the more likely attacks are to unfold without human intervention.

Intricate programming could cause an AI-powered missile to fire while the entire crew is asleep in bed, simply because the environmental conditions meet the parameters. The United States attempts to maintain relevance, but Russia’s and China’s competitive mindsets incite tensions.

It has reached the point where military-grade AI in autonomous arms may be banned internationally, because these systems can slip beyond the control of human operators. Governments must reckon with the reality of this weaponry in the coming years.

Open-source AI can gather as much data as possible and make the technology widely accessible. However, that accessibility makes blanket bans harder to institute, even on military-grade AI weaponry. Too many parties have access to this tech, and taking it away would be impossible.

How the U.S. Is Using Artificial Intelligence

U.S. implementation of AI for military purposes inspires global usage. The country must consider itself a thought leader in this sector, as its defense resources and budgets are larger than those of other nations. It has the potential to innovate and use military-grade AI in ways the world has never seen, for better and for worse.

Exercising caution is crucial for ethical implementation, alongside rigorous vetting of third-party vendors and internal operators. The priority for military AI must be increasing safety, and if this trajectory continues, the world will take notice.