For the past twenty years, artificial intelligence (AI) has been emerging as a megatrend that affects every sector. In 2020, investment in AI startups exceeded $40 billion, a 9.3% increase over 2019. Yet 87% of AI projects fail, for a variety of reasons.

Making an effective AI-driven application requires innovative thinking about every component of the project, including application development, staging, deployment, and integration with other applications.

“Coding is not the only challenge for AI engineers. To build AI-powered applications requires a complex IT environment with a myriad of tools.”

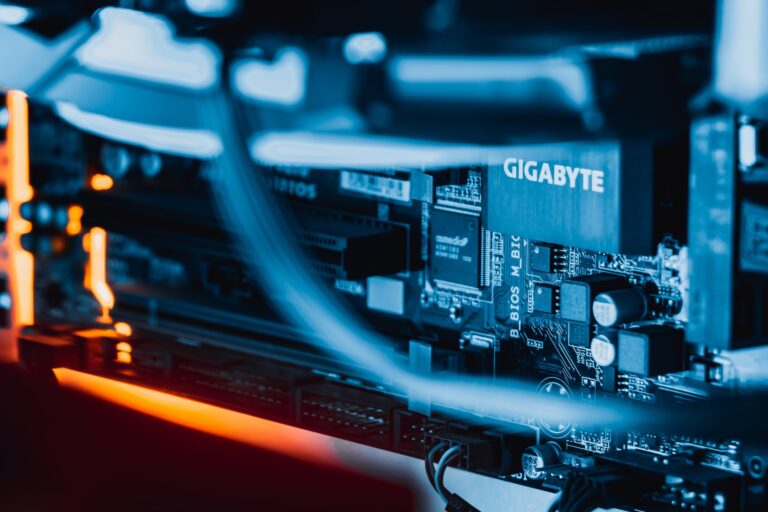

Because enterprise AI systems work with many data types, transferring data from platform to platform is challenging. AI workloads also demand significant computational resources, and the infrastructure behind them is expensive to manage and can constrain fast-growing projects.

AI projects may also face vendor lock-in, for example when a project must use a single cloud provider. Problems arise if the vendor raises prices or starts experiencing more downtime. In such cases, moving to a different vendor might help the project, but the move can be difficult because of its cost, contractual constraints, or technical issues.

Kubernetes helps address these challenges: AI workloads must scale to be effective, and Kubernetes is designed to scale containerized applications.

What is Kubernetes?

Kubernetes is featured prominently in technology news. Google announced Kubernetes as an open-source project in 2014, and version 1.0 followed in 2015. Kubernetes runs and coordinates containerized applications across clusters of servers. The platform manages the life cycle of containerized applications and services using scalable methods that support high availability.

What is Containerization?

Containerization runs an application on an operating system in a way that is isolated from the rest of the system. The application runs as if it had its own instance of the operating system, yet many containers may be running on the same operating system.

Containers make it easy to distribute and reuse applications together with the dependencies and configuration they need to run.

AI requires many coordinated software components, as well as expensive graphics processing units (GPUs) to accelerate machine learning and model training.
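As a minimal sketch of how a containerized training job can ask for GPU capacity, the snippet below builds a Kubernetes Pod manifest as a plain Python dictionary. The `nvidia.com/gpu` resource name assumes the cluster runs the NVIDIA device plugin; the pod name, container name, and image are hypothetical placeholders, not part of the system described in this article.

```python
import json


def gpu_training_pod(name: str, image: str, gpus: int = 1) -> dict:
    """Build a Pod manifest that asks the scheduler for `gpus` GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": "trainer",  # hypothetical container name
                    "image": image,     # hypothetical training image
                    # GPUs are requested under `limits`; this resource name
                    # assumes the NVIDIA device plugin is installed.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


manifest = gpu_training_pod("model-training", "example.com/ai/trainer:latest")
print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and applied with `kubectl apply -f`, after which the scheduler would place the Pod only on a node with a free GPU.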

When an AI system has to handle a high, uneven load, Docker Swarm leaves infrastructure scaling to be done manually. Kubernetes can do this automatically.

Kubernetes acts as the orchestrator: it coordinates applications and compute resources, automating the deployment, management, scaling, and networking of containers.
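To make the automatic scaling concrete, here is a small sketch of the replica calculation documented for the Kubernetes Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The function name and the default bounds are illustrative assumptions, and the real autoscaler applies further rules (such as a tolerance band and stabilization windows) that are left out here.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Approximate the Horizontal Pod Autoscaler scaling rule.

    desired = ceil(current_replicas * current_metric / target_metric),
    clamped to [min_replicas, max_replicas].
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Two pods at 90% average CPU with a 60% target: scale out.
print(desired_replicas(2, 90, 60))   # 3
# Four pods at 20% average CPU with a 60% target: scale in.
print(desired_replicas(4, 20, 60))   # 2
```

With Docker Swarm, an operator would have to perform this calculation and change the replica count by hand; in Kubernetes a controller re-evaluates it continuously.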

Case study: A Kubernetes-orchestrated AI Project

This case study is about a video surveillance and security system deployed in a smart office. The system applications include a front end, a back end, WebRTC video streaming, and an AI-based feature for video processing.

In short, AI-assisted video processing may be thought of as a series of consecutive processes, which are:

1) Decoding

2) AI computation

3) Encoding
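The three stages above can be sketched as a chain of Python generators, so that each frame flows through decoding, AI computation, and encoding in order. This is only an illustration of the pipeline shape: the `Frame` type, the byte-string "packets", and `fake_detector` are hypothetical stand-ins for a real video codec and a real model.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, Iterator, List


@dataclass
class Frame:
    index: int
    pixels: bytes
    labels: List[str] = field(default_factory=list)


def decode(packets: Iterable[bytes]) -> Iterator[Frame]:
    """Stage 1: turn incoming packets into frames (stand-in for a codec)."""
    for i, packet in enumerate(packets):
        yield Frame(index=i, pixels=packet)


def analyze(
    frames: Iterable[Frame], detector: Callable[[bytes], List[str]]
) -> Iterator[Frame]:
    """Stage 2: run the AI computation and attach its labels to each frame."""
    for frame in frames:
        frame.labels = detector(frame.pixels)
        yield frame


def encode(frames: Iterable[Frame]) -> Iterator[bytes]:
    """Stage 3: serialize each annotated frame back into bytes."""
    for frame in frames:
        header = f"{frame.index}:{','.join(frame.labels)}|".encode()
        yield header + frame.pixels


def fake_detector(pixels: bytes) -> List[str]:
    """Hypothetical placeholder for a face-recognition model."""
    return ["face"] if b"face" in pixels else []


output = list(encode(analyze(decode([b"face-data", b"empty"]), fake_detector)))
print(output)  # [b'0:face|face-data', b'1:|empty']
```

Because each stage is a separate function, each could also run in its own container, which is what lets an orchestrator scale the expensive AI stage independently of the others.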

AI computation is used for face recognition, face mask detection, or thermal screening. All of these processes require significant computing resources, especially when processing happens in real time.

If the system's load is volatile on an hourly, daily, weekly, or seasonal basis, automated management of compute resources is needed. When a new video processing request appears, the back end auto-scales with the help of the Kubernetes API, automatically adding more servers to process the request. In this way, Kubernetes works as the orchestrator that handles auto-scaling and optimizes compute resources in real time.
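A back end that scales itself in this way needs two things: a rule for how many video-processing workers the current load requires, and a request to Kubernetes to set that number. The sketch below shows one possible version under stated assumptions: `streams_per_worker` and the worker bounds are hypothetical tuning values, and the JSON body is the kind of patch that could be sent to a Deployment's scale subresource (for example through `patch_namespaced_deployment_scale` in the official Kubernetes Python client). No cluster call is made here.

```python
import json
import math


def replicas_for_streams(
    active_streams: int,
    streams_per_worker: int,
    min_workers: int = 1,
    max_workers: int = 20,
) -> int:
    """Return how many workers are needed for the current number of streams."""
    if streams_per_worker <= 0:
        raise ValueError("streams_per_worker must be positive")
    needed = math.ceil(active_streams / streams_per_worker)
    return max(min_workers, min(max_workers, needed))


def scale_patch(replicas: int) -> str:
    """Build the JSON patch body that sets a Deployment's replica count."""
    return json.dumps({"spec": {"replicas": replicas}})


# Nine concurrent streams, four streams per worker (assumed capacity).
workers = replicas_for_streams(active_streams=9, streams_per_worker=4)
print(workers)               # 3
print(scale_patch(workers))  # {"spec": {"replicas": 3}}
```

Keeping a minimum of one worker avoids a cold start when the first request of the day arrives, while the upper bound caps infrastructure cost during spikes.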

The Future of Kubernetes in AI Development

The pandemic in 2020 forced every business to react quickly to unexpected changes. Cloud-native solutions built on Kubernetes helped accelerate the pace of software development while also enabling flexible use of data in modern applications.

The scalability and distributed architecture of Kubernetes make it a strong fit for AI projects. As these solutions mature, 2021 is a year in which to expect more growth in AI development.